Part 3: Operationalizing Trust – Designing and Managing Human-AI Collaboration and Agentic System Trust

With a clear understanding of agentic AI and the foundational pillars required for its adoption, the crucial next step is to operationalize these concepts within your organization. This final part of our series will focus on concrete strategies for designing and managing Human-AI Collaboration and cultivating Agentic System Trust, ensuring that your autonomous AI initiatives deliver maximum value responsibly and sustainably. It’s about establishing practical frameworks and best practices that integrate AI seamlessly into your workforce, not as a replacement, but as an indispensable partner.

Designing for Seamless Human-AI Collaboration

Effective human-AI collaboration isn’t accidental; it’s meticulously designed. It requires a thoughtful approach to workflow, communication, and mutual learning. The goal is to maximize the complementary strengths of both humans and AI, creating synergistic outcomes that neither could achieve alone.

- Define Clear Roles and Responsibilities:

- AI’s Role: Assign AI agents tasks that leverage their strengths: high-speed data processing, pattern recognition, repetitive execution, and objective analysis. These are often tasks that are tedious or impossible for humans due to scale or speed.

- Human’s Role: Emphasize human strengths: critical thinking, complex problem-solving, creativity, emotional intelligence, strategic judgment, ethical reasoning, and adapting to unforeseen circumstances. Humans become the “orchestrators,” “overseers,” and “innovators” in an AI-augmented environment.

- Decision Points: Clearly delineate where AI makes autonomous decisions, where it provides recommendations for human review, and where it explicitly requires human intervention or approval. This makes interactions predictable and helps prevent “automation complacency,” where humans blindly trust the system (World Economic Forum, 2025).

- Implement Intuitive Human-AI Interfaces:

- Explainable AI (XAI): Design interfaces that provide transparency into AI’s reasoning. This means showing how an agent arrived at a particular conclusion or action. Visualizations, natural language explanations, and confidence scores can help users understand the AI’s “thought process.” Tools like IBM Watson OpenScale or Google Cloud Explainable AI can be instrumental here.

- Feedback Mechanisms: Create easy-to-use channels for humans to provide feedback on AI performance, correct errors, and offer new data or insights. This continuous feedback loop is vital for an agent’s improvement and for building user confidence.

- Control and Override Options: Empower users with the ability to pause, adjust, or override agent actions when necessary. This maintains human agency and ensures accountability, especially in high-stakes scenarios.

- User-Centric Design: Involve end-users in the design and development process of AI-powered tools. Their insights into workflows and pain points are invaluable for creating practical and well-adopted solutions (Medium, Jamie Rothwell, 2025).

- Foster a Culture of AI Literacy and Continuous Learning:

- Targeted Training: Provide structured training programs that go beyond technical skills. Focus on prompt engineering, understanding AI limitations, interpreting AI outputs, and ethical considerations. The Capgemini Research Institute (July 2025) highlights that only 24% of employees have received AI training, despite high concerns about job impact. Bridging this gap is critical.

- Psychological Safety: Create an environment where employees feel comfortable experimenting with AI, asking questions, and even reporting errors without fear of judgment. Encourage a growth mindset around technological change.

- Pilot Programs & Small Wins: Start with small, measurable AI initiatives to demonstrate immediate value and build confidence. Show employees how AI can save time on administrative tasks or boost specific metrics, easing resistance and fostering adoption.
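The "Decision Points" and "Control and Override Options" items above can be made concrete with a small routing rule. The following is a minimal sketch, not a reference implementation. The names `Mode`, `ProposedAction`, `route`, and `execute`, the `confidence` and `high_stakes` fields, and the 0.9 threshold are all hypothetical and would need to be set by your own policies.

```python
from dataclasses import dataclass
from enum import Enum


class Mode(Enum):
    AUTONOMOUS = "autonomous"              # agent acts on its own
    RECOMMEND = "recommend"                # agent proposes, human reviews
    REQUIRE_APPROVAL = "require_approval"  # agent waits for explicit sign-off


@dataclass
class ProposedAction:
    name: str
    confidence: float  # agent's self-reported confidence, 0.0 to 1.0 (assumed)
    high_stakes: bool  # flag set by business policy, not by the agent (assumed)


def route(action: ProposedAction, auto_threshold: float = 0.9) -> Mode:
    """Decide how much human involvement a proposed action needs."""
    if action.high_stakes:
        return Mode.REQUIRE_APPROVAL
    if action.confidence >= auto_threshold:
        return Mode.AUTONOMOUS
    return Mode.RECOMMEND


def execute(action: ProposedAction, human_override: bool = False) -> str:
    """Apply the routing decision; a human override always blocks the action."""
    if human_override:
        return "blocked_by_human"
    mode = route(action)
    if mode is Mode.AUTONOMOUS:
        return "executed"
    return "queued_for_review" if mode is Mode.RECOMMEND else "awaiting_approval"
```

In this sketch the high-stakes check runs before the confidence check, so a confident agent still cannot act alone on a high-stakes task. That ordering is one possible design choice for keeping accountability with a human.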

Cultivating Agentic System Trust: A Multi-faceted Approach

Trust in agentic AI is earned through consistent, reliable, transparent, and ethical performance. It’s about demonstrating that these autonomous systems are not only capable but also responsible and aligned with human values.

- Transparency and Explainability:

- Algorithmic Transparency Scores: Implement quantifiable measures of how interpretable an AI system is. Such scores can give clear insight into which variables influence AI decisions (DigitalDefynd, 2025).

- Auditability: Ensure all agent actions, decisions, and data interactions are logged and auditable. This provides a clear trail for troubleshooting, compliance checks, and post-incident analysis.

- Contextual Explanations: Beyond “how it works,” provide explanations for why an agent took a specific action in a given context. This helps humans understand the underlying rationale and builds deeper trust.

- Robust Governance and Ethical AI Frameworks:

- Dedicated AI Ethics Committee/Board: Establish a cross-functional body responsible for defining, overseeing, and enforcing ethical guidelines for AI development and deployment.

- Bias Detection & Mitigation: Integrate tools and processes to continuously monitor AI models for bias in data and outputs. Proactively train systems on diverse datasets to avoid perpetuating existing societal inequalities (DigitalDefynd, 2025).

- Compliance by Design: Embed regulatory compliance (e.g., GDPR, EU AI Act, NIST AI RMF) into the entire AI lifecycle, from data collection to model deployment and monitoring. This includes clear policies on data privacy, consent, and usage.

- Accountability Frameworks: Clearly define who is responsible for AI outcomes, from data scientists and developers to business owners and C-suite executives. This ensures clear ownership and mechanisms for redress when issues arise.

- Incident Response Plans: Develop detailed playbooks for responding to AI failures, security breaches, or unexpected agent behaviors. This includes immediate containment, investigation, mitigation, and communication protocols.

- Continuous Monitoring and Validation:

- Multi-Layered Monitoring: Implement comprehensive monitoring systems that track agent behavior, performance metrics, and compliance with policies in real time (Lumenova AI, 2025). This includes:

- Behavioral Monitoring: Flagging unexpected actions or deviations from intended goals.

- Real-time Anomaly Detection: Identifying unusual patterns that might indicate errors or malicious activity.

- Policy Enforcement: Automated checks to ensure agents operate within defined guardrails and permissions.

- Drift Detection: Continuously monitor for “model drift” (where AI performance degrades over time, often because the distribution of incoming data changes) and “concept drift” (where the relationship between inputs and the correct output changes).

- Human-in-the-Loop Oversight Dashboards: Create centralized dashboards where human operators can monitor agent activity, review flagged events, approve or intervene in high-risk situations, and provide feedback at scale. Dynamic risk assessment can escalate only high-risk interactions to avoid reviewer fatigue (Lumenova AI, 2025).

- User Feedback Loops: Actively solicit and integrate user feedback on agent performance. This quantitative and qualitative data is crucial for continuous improvement and for measuring user trust and satisfaction.
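As one illustration of the "Drift Detection" item above, a shift in the distribution of an input feature can be flagged with the Population Stability Index (PSI). This is a minimal sketch using only the Python standard library. The function name `psi`, the equal-width binning, and the small floor for empty bins are assumptions made for illustration. The 0.1 and 0.25 cut-offs mentioned in the comments are a commonly used rule of thumb, not a standard. Note that PSI only detects changes in input data; detecting concept drift generally requires comparing predictions against labelled outcomes.

```python
import math
from typing import List, Sequence


def psi(baseline: Sequence[float], recent: Sequence[float], bins: int = 10) -> float:
    """Population Stability Index between a baseline sample and a recent sample."""
    lo, hi = min(baseline), max(baseline)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the baseline is constant

    def shares(values: Sequence[float]) -> List[float]:
        counts = [0] * bins
        for v in values:
            # Clamp values outside the baseline range into the first or last bin.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # A small floor keeps the logarithm defined when a bin is empty.
        return [max(c / len(values), 1e-6) for c in counts]

    expected, actual = shares(baseline), shares(recent)
    return sum((a - e) * math.log(a / e) for e, a in zip(expected, actual))


# Rule of thumb (assumption): below 0.1 is stable, above 0.25 warrants review.
baseline = [i / 100 for i in range(100)]
shifted = [0.5 + i / 200 for i in range(100)]
needs_review = psi(baseline, shifted) > 0.25
```

A check like this could feed the oversight dashboard described above, so that only features whose score crosses the chosen threshold are escalated to a human reviewer.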


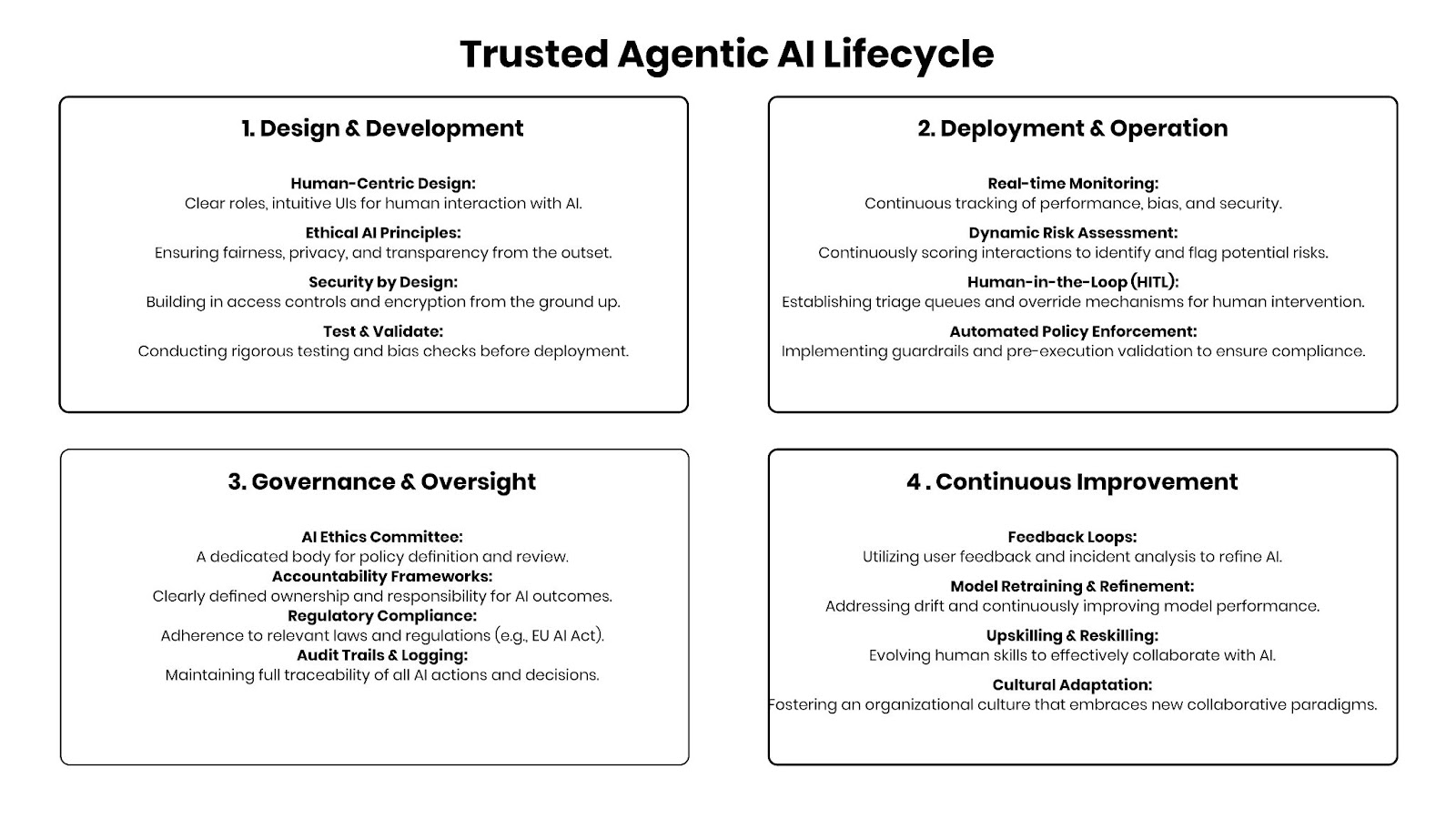

Framework for Managing Agentic System Trust (Conceptual)

To consolidate these strategies, consider a framework structured around the lifecycle of an agentic system:

Conclusion: The Future is Collaborative and Trustworthy

The journey to harness agentic AI is not about turning over the reins entirely to machines. It’s about a sophisticated dance between human ingenuity and artificial intelligence, built on a foundation of mutual understanding, clear boundaries, and unwavering trust. By proactively investing in the people, processes, technology, and data required to support this collaboration, and by implementing robust governance and continuous monitoring, organizations can unlock unprecedented value from their AI initiatives. This is the path to truly intelligent enterprises – where humans and AI don’t just coexist, but genuinely thrive together, driving innovation and shaping a more efficient, ethical, and productive future.