From AI Fascination to Operational Reality

After reading through hundreds of reflections, takeaways, speaker notes, and attendee discussions following Data Innovation Summit 2026 in Stockholm, one thing became increasingly clear: the enterprise AI conversation has fundamentally changed. What stood out across the event was not another cycle of exaggerated claims about models replacing entire professions overnight, nor an obsession with benchmarks, GPUs, or which vendor had announced the latest assistant. Instead, a much more mature and operational discussion emerged from practitioners, architects, executives, engineers, governance leaders, and transformation teams trying to solve a far more difficult challenge: how to make AI actually work inside the complexity of real organizations.

For several years, enterprise AI conversations largely revolved around capability. Bigger models, faster inference, more automation, better prompts, larger context windows, and increasingly impressive demonstrations dominated the narrative. Yet across the three days of the summit, and especially throughout the reflections shared afterward, another signal appeared far more consistently than discussions about raw model performance. The dominant theme was context. Semantic layers, ontology engineering, metadata, governance, ownership, lineage, trusted business definitions, operational workflows, and organizational structure appeared repeatedly across independent attendee reflections from completely different industries and professional backgrounds. That consistency is important because it suggests the market is converging around a new realization: enterprise AI systems are only as useful as the operational and organizational context surrounding them.

This shift matters because it signals that enterprise AI is finally moving from experimentation into operational reality. Operational environments are fundamentally less forgiving than innovation labs or product demos because they expose every weakness hidden underneath the presentation layer. Several attendees captured this perfectly in their reflections, repeatedly emphasizing that AI does not remove the importance of strong foundations but instead amplifies both strengths and weaknesses already present within organizations. Weak governance becomes more dangerous. Poor ownership becomes more visible. Fragmented workflows create even more friction. Inconsistent business definitions spread confusion faster. Bad data quality compounds rapidly once agents and automated systems begin operating at scale.

The Rise of Context-Aware Enterprise AI

One of the strongest recurring themes throughout the summit was the growing importance of semantic layers and contextual architectures. What only a few years ago was considered a relatively specialized analytics topic is rapidly evolving into one of the most strategic discussions in enterprise AI. Across multiple talks and attendee reflections, semantic layers were increasingly framed not simply as business intelligence infrastructure but as the operational bridge between enterprise data and AI systems. The market is beginning to understand that large language models alone are not sufficient to generate reliable enterprise outcomes. Models require context, meaning, constraints, definitions, ownership structures, and trusted operational signals to function effectively inside real organizations.

This perspective appeared repeatedly across discussions surrounding conversational analytics, AI agents, ontology engineering, and contextual retrieval systems. Many attendees independently arrived at similar conclusions despite attending completely different tracks and sessions. Some described the shift as “context-aware AI,” while others referred to “knowledge layers,” “semantic architectures,” or “business meaning systems.” Regardless of terminology, the direction was remarkably consistent. The industry is increasingly realizing that the future competitive moat may not simply be access to AI models, since those are rapidly commoditizing, but rather the ability to structure, contextualize, operationalize, and govern organizational knowledge in ways that AI systems can consume safely and effectively.

Joe Reis’s discussions around Mixed Model Arts strongly reinforced this broader movement back toward contextual thinking and foundational rigor. Several attendees referenced his reminder that “fundamentals are gravity,” echoing Bill Inmon’s long-standing perspective that strong data foundations remain unavoidable regardless of technological trends. That observation resonated deeply because many organizations are now discovering that AI systems cannot compensate for years of fragmented architectures, unclear ownership, undocumented logic, and inconsistent data practices. In many ways, AI is not replacing foundational data management principles but forcing enterprises to take them far more seriously than before.

AI Agents Are Forcing a Structural Reset

The rise of AI agents represented another dominant theme throughout the summit, yet what stood out most was the surprisingly mature tone surrounding the discussion. Unlike earlier waves of generative AI enthusiasm that often focused on novelty and automation theater, conversations around agents at DIS 2026 were noticeably more operational and grounded. Attendees repeatedly discussed orchestration, workflow integration, permissions, governance, memory, retrieval boundaries, accountability, observability, and operational trust. The industry appears to be moving beyond the question of whether agents are possible and toward the far more important question of how organizations can safely integrate them into production environments without creating operational chaos.

Stephen Brobst offered one of the clearest frameworks for understanding this progression by describing the evolution of agents across three phases: systems that advise humans, systems that execute tasks on behalf of humans, and eventually systems capable of autonomously interacting with other systems and agents. What made this perspective particularly compelling was not the futurism itself but the operational implications underneath it. Once agents begin executing actions across enterprise systems, context, permissions, governance, lineage, and trust become far more important. Organizations quickly realize that an autonomous system operating on poorly governed data can create problems at machine speed.

This concern around governance surfaced repeatedly throughout the summit, although notably in a very different tone than many previous industry conversations. Governance was no longer framed primarily as bureaucratic overhead slowing innovation. Instead, governance increasingly appeared as the mechanism enabling trust, scalability, operational reliability, and enterprise adoption. This represented one of the clearest signs of market maturity visible across the attendee reflections. AI governance discussions moved beyond theoretical ethics debates and into highly operational territory involving AI inventory systems, transparency, explainability, ownership structures, regulatory readiness, privacy-by-design, secure architectures, and organizational accountability.

Governance Is Becoming Trust Infrastructure

Davide Pigozzi’s discussions around AI inventory and system visibility resonated strongly because they highlighted an uncomfortable truth many enterprises are now facing: organizations cannot govern systems they cannot even fully identify or observe. Similarly, Annika Thoresson from Sveriges Riksbank demonstrated how cross-functional governance structures become essential once AI systems begin interacting with regulated operational environments. Rather than treating governance as a compliance checkbox, many organizations are beginning to understand governance as operational infrastructure for trustworthy AI adoption.

Several speakers also reinforced that organizational transformation remains far more difficult than technical deployment. Henrik Göthberg’s recurring themes across all three days perhaps captured this most effectively by continually returning the discussion toward workflows, ownership, operational friction, and organizational steering. While much of the broader market remains heavily focused on tools and models, Henrik repeatedly emphasized that “hand-overs don’t sum to change” and that fragmentation creates friction throughout organizations. His framing around Product-To-Be-Employed management, operational ownership, and steering-by-quorums rather than traditional committee structures clearly resonated with attendees because it addressed one of the deepest underlying problems enterprises face today: AI exposes organizational bottlenecks more aggressively than technological bottlenecks.

This broader organizational discussion became one of the most important undercurrents throughout the summit. Many attendee reflections independently arrived at similar conclusions that the next enterprise AI challenge is not purely technical capability but organizational capability. Companies are increasingly discovering that deploying new tools does not automatically produce transformation. Success depends on workflow integration, incentive alignment, operational ownership, employee adoption, cultural readiness, governance clarity, and leadership structures capable of supporting continuous change. AI is accelerating the need for organizations to redesign not only systems but also the ways teams collaborate, make decisions, and operationalize knowledge.

Change Management, and Why Business Must Reclaim Innovation Ownership

One of the most important signals emerging from DIS 2026 was not technological at all. Beneath all the discussions around agents, semantic layers, governance, and modern architectures, another theme continuously resurfaced across talks, roundtables, and attendee reflections: enterprise AI transformation is fundamentally a leadership and organizational challenge before it becomes a technical one.

This became especially visible through a recurring narrative across all three days of the summit, where the focus repeatedly returned to operational flow, ownership, steering, and organizational fragmentation. While much of the industry still tends to frame AI transformation as a tooling exercise delegated primarily to technology departments, the discussions at DIS 2026 pointed toward a different conclusion entirely. Business innovation can no longer be outsourced to technical teams alone because the real barriers are increasingly embedded inside workflows, incentives, decision structures, operational ownership, and organizational culture.

Many organizations still approach AI transformation through a traditional technology delivery mindset. The business identifies a need, technology teams deploy tools, dashboards, copilots, or AI systems, and leadership expects transformation to naturally follow implementation. Yet the overwhelming signal from practitioners at the summit was that deployment does not equal adoption, and adoption does not equal transformation. Several attendees independently reflected on the same frustration: organizations continue investing heavily in AI capabilities while struggling to fundamentally change how decisions are made, how workflows operate, and how people actually work on a daily basis.

This is precisely why Henrik Göthberg’s emphasis on “Product-To-Be-Employed” management resonated so strongly throughout the summit. The framing shifts the focus away from shipping technical products and toward enabling operational ways of working that people can continuously adopt, evolve, govern, and improve over time. Technology itself does not create transformation. People operationalizing technology inside real business environments create transformation. Without ownership from the business side, many AI initiatives remain trapped as isolated experiments disconnected from operational reality.

Several attendees echoed this perspective through different lenses. Some discussed the importance of “new-doers,” referring to individuals empowered to challenge and redesign operational practices rather than simply automate existing inefficiencies. Others highlighted that organizations often underestimate the human friction surrounding AI adoption, including fear, unclear incentives, fragmented accountability, skills gaps, and the absence of operational ownership. What became increasingly clear throughout the summit is that AI is exposing organizational weaknesses far more aggressively than technical weaknesses.

This explains why leadership emerged as such a critical undercurrent across the event. The organizations making meaningful progress with AI are rarely the ones simply purchasing the most advanced tools. Instead, they are often the organizations capable of aligning leadership, governance, workflows, incentives, and operational accountability around a shared transformation agenda. Several speakers and attendees independently reinforced the idea that successful AI transformation requires steering rather than traditional management. Steering implies continuous adaptation, clear ownership, dynamic prioritization, and the ability to navigate uncertainty collaboratively rather than relying on rigid project structures designed for predictable environments.

The repeated discussions around fragmentation were especially important because they revealed a growing realization that modern enterprises are often structurally misaligned for AI-driven transformation. Fragmented ownership creates fragmented context. Fragmented workflows create operational friction. Fragmented incentives slow adoption. Fragmented governance creates trust gaps. AI systems merely expose these structural problems faster because they require organizations to formalize decisions, definitions, permissions, workflows, and accountability in ways that many enterprises have historically avoided.

This also explains why so many attendees emphasized that the future of enterprise AI depends heavily on human capabilities such as judgment, leadership, adaptability, collaboration, and business understanding. The summit repeatedly challenged the simplistic narrative that AI transformation is primarily about replacing human labor. Instead, the dominant signal pointed toward augmentation, orchestration, and operational redesign. Human expertise is not disappearing. It is moving higher into interpretation, contextualization, governance, prioritization, and decision-making.

Several attendee reflections captured this shift particularly well by emphasizing that the future role of professionals is increasingly evolving from execution toward judgment. Analysts are becoming context builders rather than dashboard operators. Leaders are becoming organizational orchestrators rather than project approvers. Business teams are increasingly expected to actively shape innovation instead of passively consuming technical outputs delivered by IT departments. This may ultimately become one of the biggest organizational shifts triggered by the AI era.

The broader implication is profound. For decades, many enterprises treated innovation as something largely delegated to technology functions while business units focused primarily on operations, targets, and execution. AI is beginning to collapse that separation because the most valuable AI opportunities are deeply embedded inside operational workflows, customer interactions, decision structures, and business processes. Technology teams cannot redesign those realities in isolation. Business ownership therefore becomes essential.

This is why one of the strongest strategic signals from DIS 2026 was the movement from technology-first transformation toward business-first transformation. Organizations are increasingly realizing that AI is not merely another enterprise software category. It is an operational capability that reshapes how decisions are made, how workflows function, how knowledge moves, and how organizations create value. Such transformation cannot succeed without active leadership involvement, cross-functional alignment, and business accountability.

Leadership, Openness, and the Human Foundations of Innovation

Another important dimension that surfaced repeatedly throughout the summit was the relationship between innovation, leadership, openness, and societal culture. Interestingly, some of the strongest reflections attendees shared after the event were not necessarily about technology itself, but about the human conditions required for innovation to flourish in the first place.

Several attendees specifically referenced former Swedish Prime Minister Fredrik Reinfeldt’s keynote, where he spoke about humility, openness, curiosity, collaboration, and the importance of building environments capable of attracting highly skilled people from around the world. While his remarks were not framed purely as a technology discussion, they connected deeply with many of the broader themes emerging across DIS 2026. In an era increasingly dominated by AI acceleration, geopolitical fragmentation, and technological disruption, his message served as a reminder that innovation ecosystems are ultimately built by people, culture, trust, and long-term societal thinking.

One attendee summarized one of the strongest takeaways from the keynote through a simple but powerful observation:

“The harvest is plentiful, but the workers are few.”

The meaning resonated throughout many conversations during the summit because it captured the growing gap between ideas and implementation. Organizations today are not suffering from a lack of AI ambition. They are suffering from a shortage of operational capability, leadership readiness, organizational adaptability, and people empowered to actually execute meaningful transformation.

Fredrik Reinfeldt’s reflections around humility and openness also connected surprisingly well with many of the organizational discussions happening elsewhere across the summit. The AI era is forcing organizations to rethink not only technology stacks but also leadership behavior itself. Innovation can no longer be managed purely through rigid hierarchies, isolated departments, or highly centralized decision structures disconnected from operational reality. The organizations likely to succeed are increasingly those capable of combining technical ambition with openness to collaboration, continuous learning, cross-functional alignment, and cultural adaptability.

This became especially relevant in the context of the Nordic region, where several attendees reflected on the importance of creating environments attractive to global talent while simultaneously strengthening local ecosystems, industrial collaboration, and knowledge-sharing communities. In many ways, the discussions throughout DIS 2026 reinforced that enterprise AI competitiveness is no longer determined solely by access to infrastructure or capital. It is increasingly shaped by the ability to create cultures where curiosity, experimentation, trust, responsibility, and long-term thinking can coexist.

That human dimension also explains why so many attendee reflections repeatedly returned to words such as community, belonging, curiosity, openness, conversations, and learning. Despite the technical depth of the summit, people consistently described the event less as a technology showcase and more as a place for collective sensemaking around one of the largest transitions modern organizations have faced in decades.

In a market saturated with AI hype, exaggerated claims, and increasingly performative futurism, DIS 2026 stood out because many of the conversations remained deeply grounded in human reality.

The strongest discussions were not about replacing people. They were about helping people navigate complexity, redesign organizations, improve decision-making, build trustworthy systems, and create environments where innovation can actually survive operationally over time.

Perhaps that is one of the most important lessons emerging from the summit overall. The future of enterprise AI will not be determined only by models, compute, or software platforms. It will also be shaped by leadership quality, organizational courage, cultural openness, societal trust, and the ability to empower people to continuously adapt inside an increasingly intelligent and rapidly changing world.

The Human Role Is Not Disappearing. It Is Evolving.

Another particularly important pattern emerging from the reflections was the consistent emphasis on human judgment. Contrary to many popular narratives suggesting AI will simply replace human expertise, the discussions at DIS 2026 pointed toward a different future entirely. Human judgment, domain understanding, contextual interpretation, leadership, and decision-making appear to be increasing in importance precisely because AI systems are becoming more capable. As automation expands, the value shifts toward the humans capable of defining meaning, interpreting ambiguity, applying judgment, and governing outcomes responsibly.

This perspective surfaced repeatedly across discussions involving conversational analytics, multimodal AI, governance, industrial AI, and organizational transformation. Several attendees explicitly noted that the future role of analysts is not disappearing but evolving from dashboard production toward contextual interpretation and business meaning creation. Others described the shift as moving “from execution to judgment.” That framing is especially important because it rejects the simplistic narrative that AI success is purely about replacing labor. Instead, the emerging reality appears far more nuanced. Organizations succeeding with AI are often those empowering humans to work differently, not simply removing humans from the equation.

Industrial AI and the Return of Real-World Constraints

Industrial AI discussions reinforced this broader operational maturity even further. One of the strongest moments throughout the summit came from Anja Eimer’s observations regarding Europe’s position in industrial AI. While much of the global AI narrative remains dominated by Silicon Valley and consumer-facing generative AI products, industrial AI introduces fundamentally different requirements involving reliability, precision, safety, physical operations, engineering constraints, infrastructure resilience, and long-term operational trust. Her examples involving Siemens engineering agents, industrial automation systems, and real-world operational environments highlighted that the future of enterprise AI will not only be determined by conversational interfaces but also by how effectively AI systems integrate into complex physical and industrial processes.

This also validated one of the most important strategic shifts visible throughout the summit: the movement away from technology-first narratives toward business-first transformation. Many attendee reflections independently echoed the summit’s broader positioning around measurable value, operational impact, workflow integration, ROI, governance, and adoption. The market increasingly appears exhausted by innovation theater disconnected from operational outcomes. Organizations are no longer asking whether AI is interesting. They are asking whether AI can be trusted, operationalized, governed, scaled, and connected to measurable business value.

The Maturity Signal Before and After DIS 2026

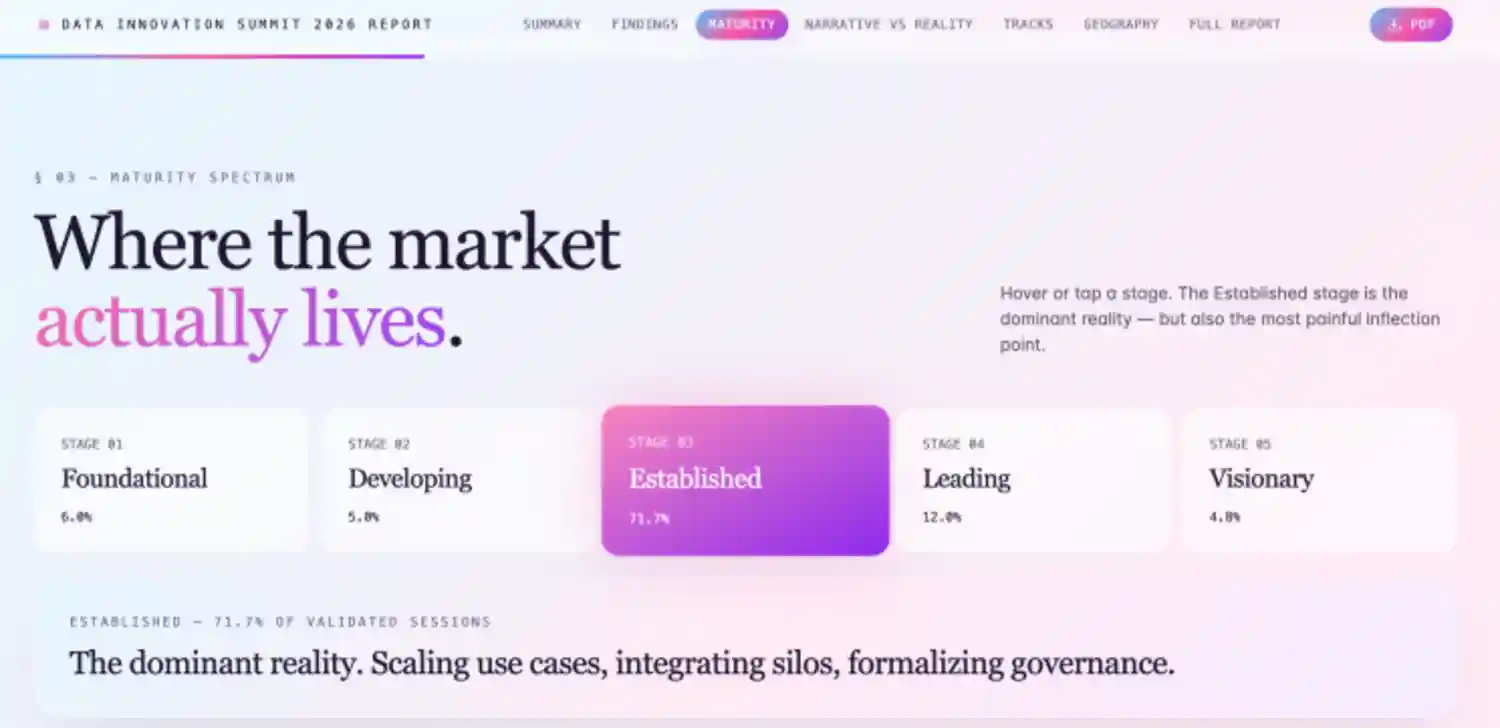

What makes many of these observations particularly interesting is that they strongly validate the findings from Hyperight’s Data Innovation Summit Data & AI Transformation Maturity Report 2026 published ahead of the summit. The report, built on thousands of data points across speaker submissions, enterprise assessments, agenda validations, vendor positioning, and delegate insights, already pointed toward a market entering a transition from experimentation into operationalization.

One of the strongest findings from the report was that the enterprise market is active, ambitious, and increasingly capable, yet structurally constrained in execution. Reading through the attendee reflections after DIS 2026, it becomes remarkably clear how accurately that reality manifested across the conversations happening during the event itself.

Before the summit, the maturity analysis identified several dominant patterns shaping the market: organizations shifting focus from experimentation toward operational value, increasing urgency around governance and AI readiness, growing concerns around fragmented architectures and ownership, and a widening realization that AI success depends far more on operational integration than isolated technical capability.

After the summit, the attendee reflections validated those findings almost point by point.

The discussions around semantic layers, ontology engineering, contextual AI, and trusted business meaning systems confirmed that enterprises are actively searching for ways to operationalize context rather than simply deploy models. The repeated references to governance, transparency, AI inventory systems, lineage, privacy, and trust validated the report’s conclusion that governance is no longer viewed as optional overhead but increasingly as a prerequisite for scalable enterprise AI adoption.

Equally important was how strongly the post-event reflections reinforced the report’s observations around organizational maturity. The maturity assessment had already indicated that many enterprises were struggling less with access to AI technologies and more with operational alignment, change management, ownership clarity, and execution capability. Across the attendee testimonies, this exact pattern appeared repeatedly. People continuously returned to themes such as workflow redesign, business ownership, organizational fragmentation, AI literacy, cultural adaptability, operational trust, and leadership responsibility.

Even the emotional tone of the summit reflections aligned closely with the report’s broader conclusions. The maturity analysis suggested that the market was beginning to move beyond AI fascination into a more sober operational phase where measurable outcomes, adoption, governance, integration, and business value matter more than hype. That exact shift became highly visible throughout the post-event discussions. Very few attendees focused on novelty for novelty’s sake. Instead, most reflections centered around operational realities, practical implementation, organizational learning, and sustainable transformation.

What makes this moment novel is that the same maturity signals we identified before the summit through structured research were independently confirmed after the summit through the language of the community itself.

Perhaps most fascinating was the convergence between the maturity data and the human observations emerging from the summit itself. The report identified that one of the largest barriers to enterprise AI maturity was not infrastructure but learning. Not simply technical learning, but organizational learning. Reading the attendee reflections afterward, it became increasingly obvious that the market is collectively trying to answer the same question: how do organizations redesign themselves to operate effectively in an AI-native world?

That may ultimately be the real value of gatherings like DIS 2026. Beyond the stages, technologies, workshops, and announcements, the summit became a real-time reflection of enterprise AI maturity itself. A snapshot of an industry moving away from isolated experimentation and toward the much harder work of operational transformation.

The maturity report identified the signals before the event.

The attendee reflections confirmed them afterward.

And together, they tell a remarkably coherent story about where enterprise AI is actually heading next.

Why DIS 2026 Felt Different

Perhaps most importantly, DIS 2026 revealed that the market is beginning to mature emotionally as well as technically. Beyond the discussions around architecture, governance, and agents, attendee reflections repeatedly emphasized curiosity, conversations, community, trust, belonging, learning, and meaningful exchange. The event itself was often described as energetic without being superficial, serious without being sterile, and ambitious without collapsing into hype. That balance matters because the enterprise market is increasingly searching for environments capable of supporting honest operational discussions rather than exaggerated promises detached from reality.

In many ways, the strongest signal from DIS 2026 was not simply technological but philosophical. The industry appears to be rediscovering something deeply important:

- intelligence without context is fragile,

- automation without governance is dangerous, and

- innovation without operational alignment rarely survives contact with reality.

AI is forcing organizations to confront foundational questions about meaning, ownership, trust, responsibility, workflows, and decision-making structures in ways few previous technological waves ever demanded.

The organizations that succeed in the next phase of enterprise AI will likely not be those chasing every new model release or building the loudest demonstrations. They will be the organizations capable of combining strong foundations, contextual understanding, governance maturity, operational clarity, and human judgment into coherent systems that AI can actually operate within responsibly. That is a much harder challenge than deploying another assistant or chatbot, but it is also where the real long-term value will emerge.

If DIS 2026 revealed anything clearly, it is that enterprise AI is no longer in its experimentation phase. It is entering its context era.

Thank you for visiting Stockholm! Thank you for visiting the Nordics! Thank you for visiting the Data Innovation Summit!