Lately, there have been scores of initiatives to rein in artificial intelligence technology as it races ahead. Government leaders, business leaders, and academics have grasped the pressing need for ethical guidelines and frameworks surrounding the use of AI.

The European Commission’s Ethics Guidelines for Trustworthy AI are one such initiative, and they represent an important step towards creating AI technologies that people and businesses can trust and that can improve people’s lives.

We can have guidelines in place, but the main questions remain: How can we ensure compliance with these rules for the safe use of AI methods in companies? And ultimately, how will this compliance impact business?

Patrick van der Smagt, Head of AI Research at Volkswagen Group, will elaborate on these and related questions during his Keynote presentation on Ethical and Trustworthy Artificial and Machine Intelligence at the Data Innovation Summit 2020. In this interview with Patrick, we scratch the surface of the profound topic of ethical and trustworthy AI.

Hyperight: Hi Patrick, it’s a pleasure having you as one of the Keynote speakers at the 5th edition of the Data Innovation Summit. Please tell our readers a bit about yourself and your role at Volkswagen Group.

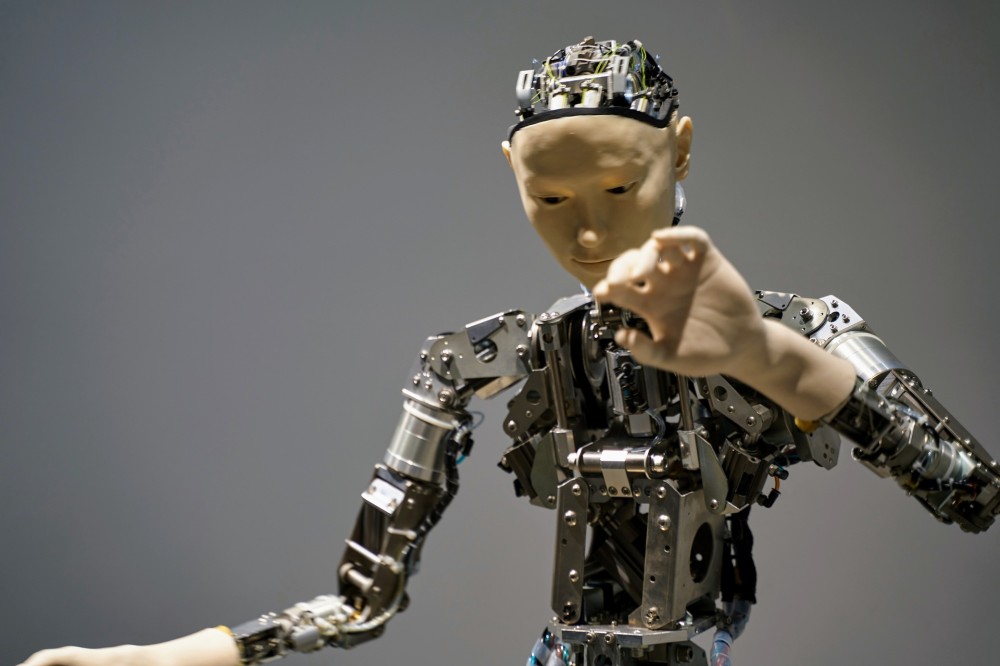

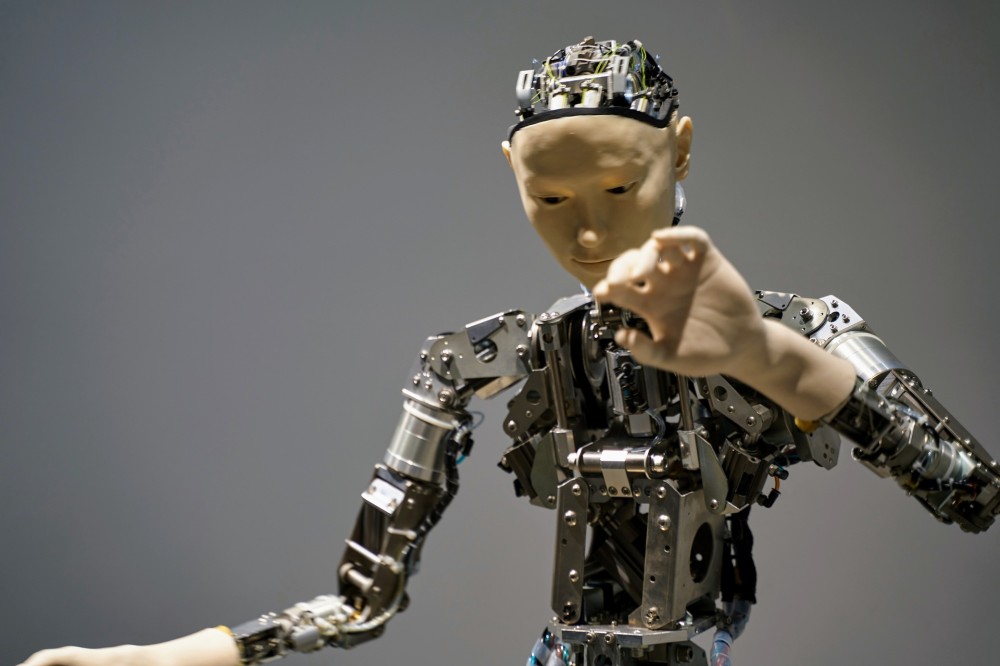

Patrick van der Smagt: I’ve been doing research on machine learning my whole professional career. I started with my master’s on neural networks for character recognition in the late 1980s, did my PhD in the 90s on neural networks and robotics, and then went on to research in machine learning, robotics, brain-machine interfaces, and related topics.

A few years ago, I left my professorship in Munich for corporate research at Volkswagen, still focusing on fundamental research and on academic-like open output and collaboration models. And I very much enjoy the wider impact that corporate fundamental research has. We don’t “just” write papers, but we can mature our machine-learning methods to work on real data.

With the success of AI as it is, fundamental research and deployment must talk. I feel we have not even seen the tip of the iceberg of what’s possible with machine learning, but I think we’re going in the right direction with the investment in research that we see. But don’t expect that we’ll have artificial general intelligence in the next few years, or else you’ll be very disappointed. Or relieved, for that matter?

Hyperight: You are going to deliver a presentation on the subject “Ethical and Trustworthy Artificial and Machine Intelligence”, in which one of the points is the use of facial recognition. Recently, news surfaced that the European Commission is considering a temporary ban on facial recognition in public places as one more effort to regulate AI. What is your take on this? How will it potentially affect business?

Patrick van der Smagt: As a scientist, I enjoy just trying things and seeing what happens. It’s very elucidating, but not principled enough for a thorough analysis. And once this goes large-scale, one needs to think twice. We can’t be too cautious with the societal impact of new technology. Luckily, Europe is not known for shooting very quickly, but rather for acting and reacting cautiously.

For this reason, we need to act now and set up a regulatory path to decide, for each of these new technologies, when and where we can safely use them, and when to be cautious. Face recognition is a good example: as long as the data remain local, most people will think it’s a great technology to unlock a mobile device. But the same people will probably not like to have their face tracked in a supermarket to receive targeted commercials – or worse. The technology is not to blame, but its use must be differentiated with respect to privacy, explainability, and so on.

We must act now, and for that reason, I have started a Europe-wide initiative, involving industry and academia, on laying down accountable rules for the use of AI. This initiative takes the EC’s Ethics guidelines as a starting point and will create and put in place a regulatory framework on how to safely use AI methods in companies.

Hyperight: Before this, we witnessed the publication of the EC’s Ethics guidelines for trustworthy AI. But with the challenges related to the lack of a clear definition of AI and lack of AI explainability, how can we ensure full compliance?

Patrick van der Smagt: “Full compliance” remains a goal, and we need to put processes in place that can flexibly investigate ethical use, and also, of course, to do research on algorithms.

Hyperight: On the other hand, McKinsey Global Institute has collected about 160 cases of AI’s actual or potential uses for the noncommercial benefit of society, which are aligned with United Nations SDGs, which is also one of the points of your presentation. What are the social domains where AI can have an instrumental role in advancing human life?

Patrick van der Smagt: Where not? One sees that there often is a focus on issues in developing countries, but Europe itself is threatened by energy, transportation, gender, and other issues—and these issues are global.

For these, AI is just a tool. It can be a good tool, but a tool without someone knowing how to use it is, currently, still quite useless. So, the responsibility lies with the people who use the tools, to advance our society into something more inclusive and open.

Hyperight: And lastly, where do you see this battle heading, between regulating AI at one extreme and putting it to use for social good at the other? Where will it take us?

Patrick van der Smagt: Regulating AI is not a battle; it is an opportunity, since it gives us, for the first time in history, a set of tools that can measurably reduce bias. I don’t see many people disagreeing on the necessity; we need to get consensus on how to go forward efficiently. And, interestingly enough, it’s also an opportunity for research. Take GDPR as an example. Even if one may criticise how it was done, GDPR is very important for us citizens. And it feeds machine learning research, e.g. related to differential privacy. And what about explainability in machine learning? That is also a topic which is close to probabilistic inference, if one thinks of it. Exciting times.
